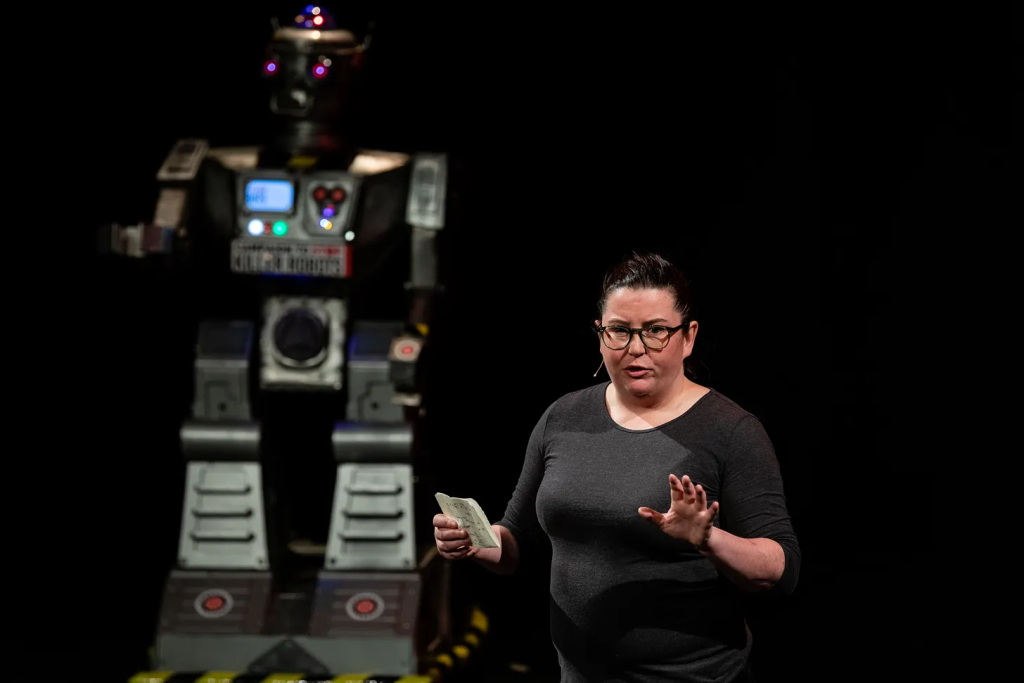

Laura Nolan left Google in 2018 over Project Maven. Now, she reflects on advancements in military AI and recent employee pushback within the tech giant.

By Laura Nolan

This article is a repost from the Stop Killer Robots Substack.

I left Google in 2018 after learning of its involvement in Project Maven. This US Department of Defense programme applied machine learning to aerial surveillance imagery in order to identify potential targets as part of the military kill-chain. At the time, I, and many other Google software engineers, were deeply concerned about what happens when systems designed for object identification and classification are embedded into lethal decision chains, and how difficult it becomes to maintain meaningful human oversight once they are scaled.

Many of the concerns my colleagues and I had eight years ago are echoed in the letter that current Google employees sent to their CEO, Sundar Pichai, last week.

A great deal of internal discussion at the time centred on two questions: whether clear boundaries could be maintained, so that “AI for protecting our troops” could be separated from “AI for targeting at scale”, and whether internal review mechanisms and ethical principles would be sufficient to prevent downstream misuse. Those questions have not gone away.

What has changed is that we now have significantly more empirical evidence about how these systems behave, and how they cause harm, in practice.

Since 2018, the broader geopolitical environment has changed. Active conflicts in Ukraine, Gaza, and Sudan have demonstrated both the increasing role of data-driven systems in warfare and the speed at which operational cycles can compress under pressure. At the same time, international norms around proportionality, distinction, and civilian protection remain formally in place, but are under increasing strain in practice.

Machine learning systems have been increasingly integrated into military intelligence and targeting workflows. Much of this has been described as decision support: systems that surface, rank, or prioritise information for human review. In practice, however, these systems do much more than support decisions: they rapidly generate decisions that human operators then rubber-stamp.

We have seen this in multiple recent conflicts. In Gaza, reporting by +972 Magazine and others describes systems like Lavender and the Gospel used by the Israeli military to generate large volumes of potential Palestinian targets based on statistical correlation, behavioural inference, and pattern-of-life analysis. Similar techniques have been discussed in other contexts, including Ukraine, where AI-assisted tools have been used for surveillance analysis and identification tasks under conditions of high operational tempo.

These systems are not deterministic. They produce probabilistic outputs, often with implicit uncertainty. In real conflict environments, those machine-generated outputs are frequently treated as sufficiently reliable to act upon, particularly when human operators are under time pressure and dealing with large volumes of incoming information.

Each individual decision may still formally involve a human operator. However, the system defines the set of options, their ordering, and the rate at which they are presented. At that point, the human role often shifts from independent assessment to validation within a pre-filtered queue of machine-generated suggestions.

This is where the concept of “rubber-stamping” becomes relevant. There is a well-documented tendency for human operators to defer to system outputs when workload is high and time is limited.

Image caption: The +972 Magazine article that broke the news about AI targeting in Gaza in 2023.

When a system produces hundreds or thousands of prioritised alerts or candidate targets, as we recently saw with the thousands of targets generated at speed by the US and Israel in Iran, meaningful per-item scrutiny does not scale. The human operator is still present, but their function becomes procedural rather than analytical.

There is also a related phenomenon sometimes described as autonomy creep. Systems introduced as advisory or assistive gradually become embedded in decision pathways in ways that increase their influence over outcomes. A model used to flag potentially relevant entities may later be used to prioritise them. A prioritisation system may later be used to generate action recommendations. At each stage, the change may appear incremental, but the cumulative effect is a shift in decision authority from a human to a machine.

Surveillance systems play a central role in this process. As Derek Gregory has argued in his work on contemporary warfare, persistent surveillance does not simply improve targeting accuracy. It expands the production of targets by increasing the volume of observable and classifiable activity. In other words, surveillance does not just identify pre-existing targets; it helps define what becomes a targetable object.

In recent reporting on the use of AI-assisted systems in Gaza, including those described as generating large target pools, this dynamic appears particularly pronounced.

When large populations are continuously surveilled and analysed through behavioural and relational data, the number of “matches” or flagged individuals can scale far beyond what traditional intelligence processes would produce.

This is not simply a question of accuracy. It is a question of throughput. Once the rate of target generation increases significantly, the constraint shifts from identification to processing and action. That change in constraint alters operational behaviour. Systems trained to detect anomalies or correlations will, by design, produce false positives. In low-tempo environments, these can be filtered and reviewed more carefully. In high-tempo conflict environments, the operational incentive is often to act on them, particularly when the system is treated as having pre-screened the space of possibilities.

The effect is that surveillance and automation together can increase the number of entities considered legitimate military objectives, even if the underlying data has not materially changed. These are not edge cases. They are structural properties of how such systems operate when deployed at scale. This is why questions of oversight are not ancillary. They are central to whether these systems can be used responsibly at all.

This brings us to another concern raised by the Google workers in their recent letter to their CEO: classified environments. In these environments, the usual mechanisms of scrutiny are significantly weakened.

External review is absent by definition. Internal auditing is often limited in scope. Feedback from real-world outcomes may not be systematically available to those developing or maintaining the systems. Without such feedback loops, it becomes difficult to evaluate system performance in a meaningful way over time. All AI developers know that a tight feedback loop is essential in order to maintain acceptable system performance on machine learning tasks.

This matters because errors in these systems are not always immediately visible. A misclassification may only be detected if there is a mechanism for post-hoc review and comparison against ground truth. In the absence of such mechanisms, error rates can persist or accumulate without clear visibility to developers or decision-makers. Ultimately, these mistakes or oversights can result in civilian deaths and destruction of vital infrastructure.

There is a recurring argument that participation in military AI development is necessary in order to “shape” its use. This argument generally assumes that developers retain sufficient visibility and influence over deployment contexts to meaningfully constrain misuse. That assumption does not hold in classified or opaque operational settings.

These are not abstract governance issues. They are practical limitations on the ability to ensure safe operation. While companies may pursue development and deployment despite these concerns, states have a duty to ensure safeguards. The need for regulation was a key reason why I joined the Stop Killer Robots campaign, which has been calling for a treaty on autonomy in weapons systems for over a decade. This year, at a UN meeting in November, states have the opportunity to launch negotiations. With the growing gap between military AI development and regulation, I hope they do.

When I left Google, I believed that certain lines should not be crossed, particularly where systems may contribute to lethal decision-making without meaningful oversight. What has become clearer since then is that those systems are being deployed to devastating humanitarian effect.

The question is no longer whether the risks are theoretically acceptable. It is whether the available control mechanisms are sufficient in practice to manage them. It is clear that they are not.

Laura Nolan is a software engineer and a member of the Stop Killer Robots campaign. She is also a member of the International Committee for Robot Arms Control. She left her job at Google in 2018 over concerns about Google’s Project Maven contract with the US Department of Defense.